Mythos and the Evolving Cyber Landscape: Implications and Policy Priorities

On April 7, Anthropic announced Claude Mythos Preview, its most cyber-capable model to date. To mitigate risks from broad access, Anthropic also launched Project Glasswing, a differential access program that limits Mythos to a few major technology companies. Anthropic’s internal assessments and independent evaluations point to significant advances in vulnerability discovery, exploit generation, and automated attack capabilities.

Policymakers should treat Mythos, and the accelerating trajectory of AI cyber capabilities, as a national security risk requiring urgent action. Models throughout 2025 already demonstrated sophisticated cyber capabilities, and Opus 4.6 marked a notable jump when it was released in February 2026. Mythos, just two months later, appears to represent another leap. These capabilities are unlikely to plateau. The UK government assesses that capabilities are doubling every four months, up from every eight months previously. Nor will they stay exclusive to Anthropic or the United States. Other AI labs are developing similar products, and Chinese AI models are estimated to be seven months behind American models.
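To make the doubling-time comparison concrete, the short sketch below converts a doubling period into an implied yearly growth multiple. It assumes smooth exponential growth, which is a simplification rather than something the UK assessment states, and the function name is illustrative.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """Growth multiple over 12 months, assuming steady exponential doubling."""
    return 2 ** (12 / doubling_months)


# Compare the earlier eight-month estimate with the current four-month one.
for months in (8, 4):
    factor = annual_growth_factor(months)
    print(f"doubling every {months} months -> about {factor:.1f}x per year")
```

Under that assumption, halving the doubling time from eight months to four raises the implied yearly gain from just under threefold to eightfold.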

The central national security risk is that cyber defenders cannot keep pace with attackers, who have stronger incentives and fewer barriers to automating their operations.

Key Takeaways for Policymakers:

Accelerated vulnerability discovery could create systemic risk if remediation cannot keep pace: AI models are discovering vulnerabilities at increasing rates, but downstream defenders already struggle to apply patches, with many systems remaining unpatched for months or years. Policymakers should sustain federal remediation programs and drive the development of more secure software.

The security of critical software may be left behind: Open-source software is foundational for the modern software ecosystem, and operational technology (OT) runs critical infrastructure. But open-source maintainers and OT developers face resource constraints and may not have access to models like Mythos. Policymakers should fund vulnerability discovery for open-source software and expand initiatives like Project Glasswing to cover OT systems.

Emerging autonomous attack capabilities could prey on poor security: Mythos demonstrated a capability jump in automated attacks. These attacks will be highly opportunistic, exploiting years of underinvestment in security and remediation backlogs. Policymakers should support defensive automation and incident response.

Compute will provide adversaries with strategic and operational cyber advantages: Compute enables both training more capable models and improving performance on complex cyber tasks. Policymakers should maintain export controls and protect the infrastructure that sustains America's AI advantage.

Reported Cyber Capability Progress and Implications

Vulnerability Discovery and Exploitation: Mythos shows significant improvement over already capable models

Anthropic’s assessments indicate that Mythos Preview's most significant capability advance is in discovering unknown software vulnerabilities — flaws in code that attackers exploit to compromise systems.¹ Vulnerabilities are endemic to software,² and remain one of cybersecurity’s most persistent challenges. Some of the most damaging cyber attacks of the past decade exploited these flaws, including the WannaCry and NotPetya attacks. High-risk vulnerabilities in widely used software, such as Log4j in 2021, can put millions of systems at risk overnight. For years, finding vulnerabilities has been slow, manual, and expensive, requiring skilled experts to analyze and test code.

AI vulnerability discovery capabilities were advancing rapidly³ before Mythos. Google’s Project Zero reported the first previously unknown vulnerability discovered by an AI model in 2024. Through most of 2025, AI-discovered vulnerabilities were reported in single or double digits. Opus 4.6, released in February 2026, found over 500 vulnerabilities across open-source software. Anthropic reports that Mythos has discovered thousands of high- or critical-severity vulnerabilities,⁴ including flaws in every major operating system and web browser.
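The endnote to the "thousands" figure says reviewers manually verified 198 findings and agreed with the model's severity rating 89% of the time. The sketch below shows how much sampling uncertainty a check of that size carries, using a Wilson score interval. This is a standard statistical technique chosen here for illustration, not a method Anthropic reports using, and it assumes the 198 verified findings are a random sample of the full set.

```python
import math


def wilson_interval(p_hat: float, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Figures from the endnote: 198 manually verified findings, 89% agreement.
low, high = wilson_interval(0.89, 198)
print(f"agreement rate plausibly between {low:.1%} and {high:.1%}")
```

The interval spans several percentage points on either side of 89%, which is worth keeping in mind when extrapolating from the verified sample to the full set of reported vulnerabilities.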

To leverage vulnerabilities, attackers must develop working exploits, which act as the crowbar for breaking into vulnerable systems. Generating a sophisticated exploit can be labor intensive, taking humans days or weeks. Researchers have already used older models to generate sophisticated exploits in hours with targeted prompting. Anthropic’s assessment indicates that Mythos is significantly more capable at exploit generation than previous models. In one assessment, Mythos reportedly chained four separate vulnerabilities to compromise a web browser’s security, including escaping the browser’s sandbox and escalating privileges on the underlying operating system — a sequence that Anthropic says would have taken security experts weeks. Both vulnerability discovery and exploit generation capabilities are further enhanced by integrating models into purpose-built systems, where even less capable models have outperformed more powerful ones.

Analysis and Implications

These capabilities are shifting vulnerability discovery from a labor-intensive process to one that can be automated at scale by defenders and attackers. This is accelerating discovery rates⁵ and could create a "discovery boom" as models uncover flaws that have accumulated over years. This has several implications for the cyber landscape:

Accelerated discovery could create systemic risk if remediation cannot keep pace. Discovering vulnerabilities is just one stage of the remediation lifecycle. In the best-case scenario, the vulnerability is first reported to the developer. Developers create and release a patch. However, once that patch is released and the vulnerability is publicly disclosed, attackers can start exploiting vulnerable systems⁶ while downstream deployers work to identify and update thousands or even millions of vulnerable systems. This last mile is already difficult for critical but under-resourced defenders like hospitals and public utilities, where patching is disruptive and operational demands often prevent taking systems offline to apply updates. Many systems can remain unpatched for weeks, months, or even years. Accelerated discovery is already putting pressure on developers and will create growing backlogs for deployers, potentially leaving millions more systems vulnerable. A doubling or tripling in the rate of discovery of catastrophic vulnerabilities — easy to exploit and affecting millions of systems, like the one in Log4j — would overwhelm an already strained patching ecosystem.

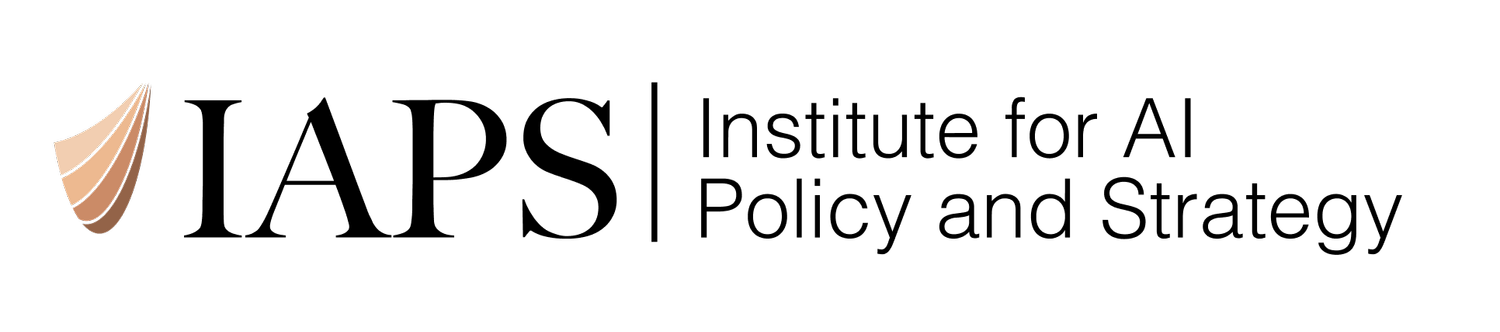

Patching Frequency in Critical Infrastructure

Figure 1: Patching frequency in OT environments across critical infrastructure sectors. One survey of OT providers found that only 15% of organizations apply patches monthly or more frequently. In most sectors, patches are applied quarterly or less. 48% of respondents cited limited personnel or expertise and 47% cited concerns about operational downtime as top barriers to patching. Graph and data are from TXOne Networks, 2024 Annual OT/ICS Cybersecurity Report (n=150).
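The argument that a doubling or tripling of discovery would overwhelm remediation can be illustrated with a simple backlog model: each month, newly discovered vulnerabilities are added and a fixed remediation capacity is subtracted. All numbers below are hypothetical and chosen only to show the dynamic; they are not drawn from the survey data or any other source.

```python
def backlog_over_time(discovery_rate: int, remediation_capacity: int,
                      months: int, start: int = 0) -> list[int]:
    """Track the unpatched backlog month by month under constant rates."""
    backlog = start
    history = []
    for _ in range(months):
        # The backlog cannot go negative: spare capacity is simply unused.
        backlog = max(0, backlog + discovery_rate - remediation_capacity)
        history.append(backlog)
    return history


CAPACITY = 110  # hypothetical: vulnerabilities an organization can patch per month
for label, rate in [("baseline", 100), ("doubled", 200), ("tripled", 300)]:
    final = backlog_over_time(rate, CAPACITY, months=12)[-1]
    print(f"{label:>8}: backlog after 12 months = {final}")
```

In this toy setup a defender who keeps up comfortably at the baseline rate accumulates a backlog that grows without limit once discovery doubles, because remediation capacity does not scale with it.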

Automated discovery could become a strategic advantage for nations with significant compute. Zero-days are vulnerabilities exploited before a patch exists, leaving defenders unprotected or unaware they are at risk. Nation-state actors have long had the resources to discover or acquire zero-days for use against well-defended, high-value targets. For example, China has a pipeline that funnels newly discovered vulnerabilities to the state, where they are either reported to developers or kept for offensive operations. If automated discovery proliferates broadly, individual zero-days may hold less strategic value as more actors independently discover the same flaws. However, nation-states with compute could go further — training and running specialized AI systems to find vulnerabilities on demand in a target’s specific software, rather than stockpiling a static inventory. With Chinese general AI capabilities estimated at roughly seven months behind the U.S. frontier, adversaries could reach Opus 4.6-level capabilities by September 2026 and Mythos-level by November 2026 or sooner.⁷
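The projected dates follow from adding the estimated seven-month lag to each model's release month. The sketch below reproduces that arithmetic. It treats the lag as a fixed offset, which is a simplification, and the helper name is illustrative.

```python
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, pinned to the first day of the month."""
    total = d.year * 12 + (d.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


LAG_MONTHS = 7  # estimated gap between Chinese and U.S. frontier models, per the text

releases = {
    "Opus 4.6": date(2026, 2, 1),        # released February 2026
    "Mythos Preview": date(2026, 4, 1),  # announced April 7, pinned to the month
}
for name, released in releases.items():
    projected = add_months(released, LAG_MONTHS)
    print(f"{name}-level capability: {projected.year}-{projected.month:02d}")
```

Applying the same offset to the late-February internal-readiness date mentioned in the endnotes would move the Mythos-level estimate up to about September, which is one reading of "or sooner."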

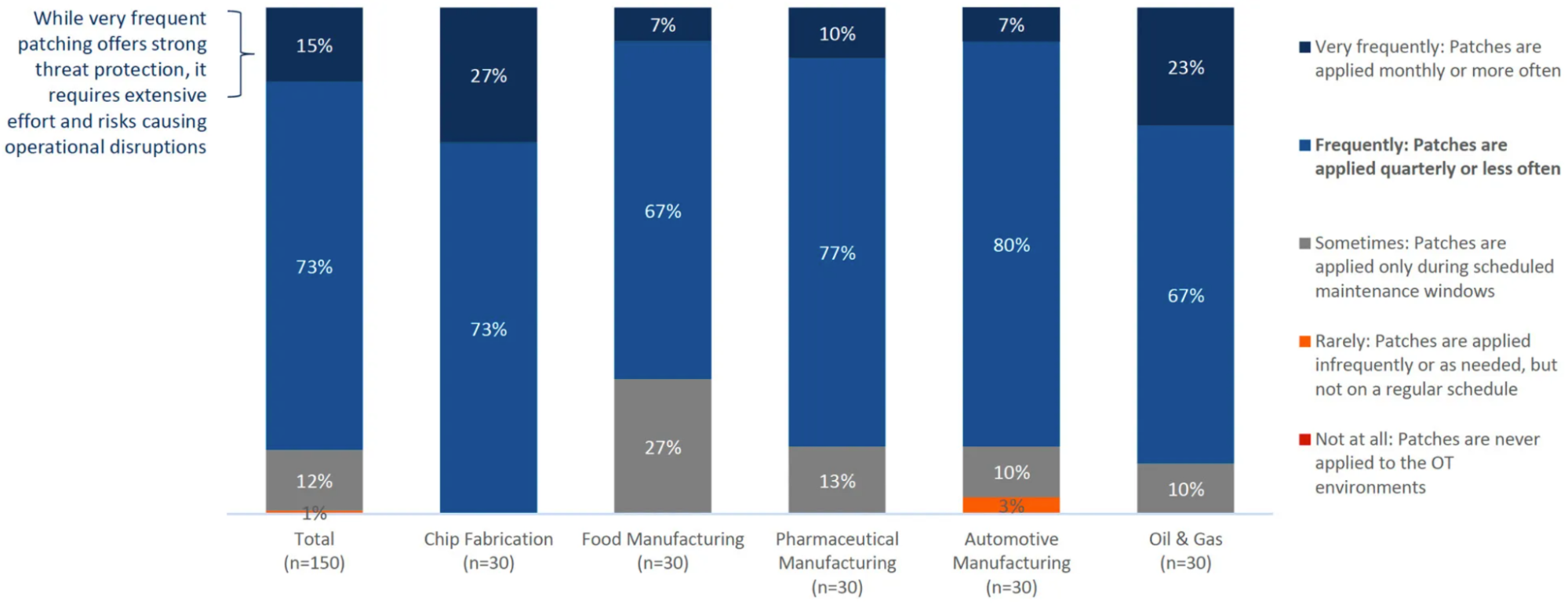

Automated exploit generation is already likely compressing attacker timelines. More capable models allow attackers to generate sophisticated exploits that bypass layered defenses, a capability previously limited to the most skilled operators. Combined with automated discovery, exploit generation compresses the time between disclosure and active exploitation. The average time from disclosure to working exploit dropped from 2.3 years in 2018 to 23 days in 2025 and, as of April 2026, to 20 hours.

Figure 2: The average time from vulnerability disclosure to confirmed exploitation has collapsed from 2.3 years in 2018 to 20 hours as of April 2026. The number of weaponized exploits has also increased significantly over the same period. (Source: zerodayclock.com, based on 3,529 CVE-exploit pairs from CISA KEV, VulnCheck KEV, and XDB)
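Because the three figures are quoted in different units, the scale of the compression is easier to see once they are converted to hours. The sketch below does that conversion using only the numbers cited above; the average year length is an assumption made for the conversion.

```python
HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365.25  # assumption: average year length

# Average time from disclosure to exploitation, converted to hours.
timeline_hours = {
    2018: 2.3 * DAYS_PER_YEAR * HOURS_PER_DAY,
    2025: 23 * HOURS_PER_DAY,
    2026: 20,  # as of April 2026
}

latest = timeline_hours[2026]
for year, hours in timeline_hours.items():
    ratio = hours / latest
    print(f"{year}: {hours:,.0f} hours ({ratio:,.1f}x the April 2026 figure)")
```

On these figures, the window has shrunk by roughly a factor of a thousand since 2018 and by more than a factor of twenty-five since 2025.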

Resource constraints may limit the benefits of automated discovery for open-source software. Open-source projects provide critical components to millions of downstream products, but most maintainers lack the resources to find vulnerabilities or incentivize others to report them. Major technology companies do provide support, but their contributions remain relatively small. Anthropic has committed $100 million in usage credits across Glasswing's launch partners — primarily large commercial technology firms — and 40+ additional organizations with extended access, plus $4 million in donations to open-source security organizations. The composition of all participants⁸ is not known, making it difficult to assess how much of Glasswing's resources reach open-source maintainers directly. Since Mythos Preview's inference costs are roughly five times higher than those of Opus 4.6,⁹ independent researchers are unlikely to spend thousands in compute to report bugs to maintainers offering minimal or no bounties. When researchers do find critical flaws, the financial incentives favor selling high-risk vulnerabilities to brokers, criminals, or nation-states over reporting them. Without targeted support, AI-powered discovery will flow to well-resourced companies while the open-source software they all depend on falls further behind.
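The cost barrier can be made concrete with the per-token prices cited in the endnotes. The sketch below prices a single scanning run on both models. The token volumes are hypothetical and serve only to illustrate the arithmetic; real usage for a vulnerability-discovery effort is not reported in the sources.

```python
# USD per million (input, output) tokens, as cited in the endnotes.
PRICES = {
    "Mythos Preview": (25.0, 125.0),
    "Opus 4.6": (5.0, 25.0),
}


def run_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one run at the listed per-million-token prices."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


# Hypothetical workload: scanning one mid-sized codebase.
workload = {"input_tokens": 100_000_000, "output_tokens": 10_000_000}
for model in PRICES:
    print(f"{model}: ${run_cost(model, **workload):,.0f}")
```

Because both input and output prices are five times higher, the fivefold gap holds for any mix of input and output tokens, so a run that costs hundreds of dollars on Opus 4.6 costs thousands on Mythos Preview.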

Project Glasswing appears focused on information technology, not the operational technology that runs critical infrastructure. Information technology (IT) systems handle data processing and communications, while operational technology (OT) systems control physical processes in power grids, water treatment plants, and manufacturing facilities. Glasswing’s current participants are primarily IT-focused companies, including major operating system providers and enterprise technology firms. Discovering and patching vulnerabilities across these ecosystems is valuable, but the program appears to exclude major OT component manufacturers like Siemens, Rockwell, and Schneider Electric, whose products — including the software and firmware that control physical processes — are used in critical infrastructure.¹⁰ These environments also frequently run older legacy systems whose developers may no longer provide patches, resulting in vulnerabilities that are found but never fixed.

Autonomous Attack Capabilities: Mythos demonstrates progress in automating end-to-end attacks

Generating or acquiring exploits is just one part of a cyber operation. Attackers also find vulnerable systems, deliver exploits, and move through networks toward their objectives. Human operators can spend days or weeks on a single campaign, with the identification of security gaps and viable attack paths driving much of the effort. AI models are well suited for these tasks. They can combine rapid pattern recognition with encyclopedic knowledge of weaknesses, persistently search for opportunities, and adapt after failing.¹¹ In Anthropic’s assessment, Mythos was highly capable at finding and exploiting known vulnerabilities and misconfigurations.

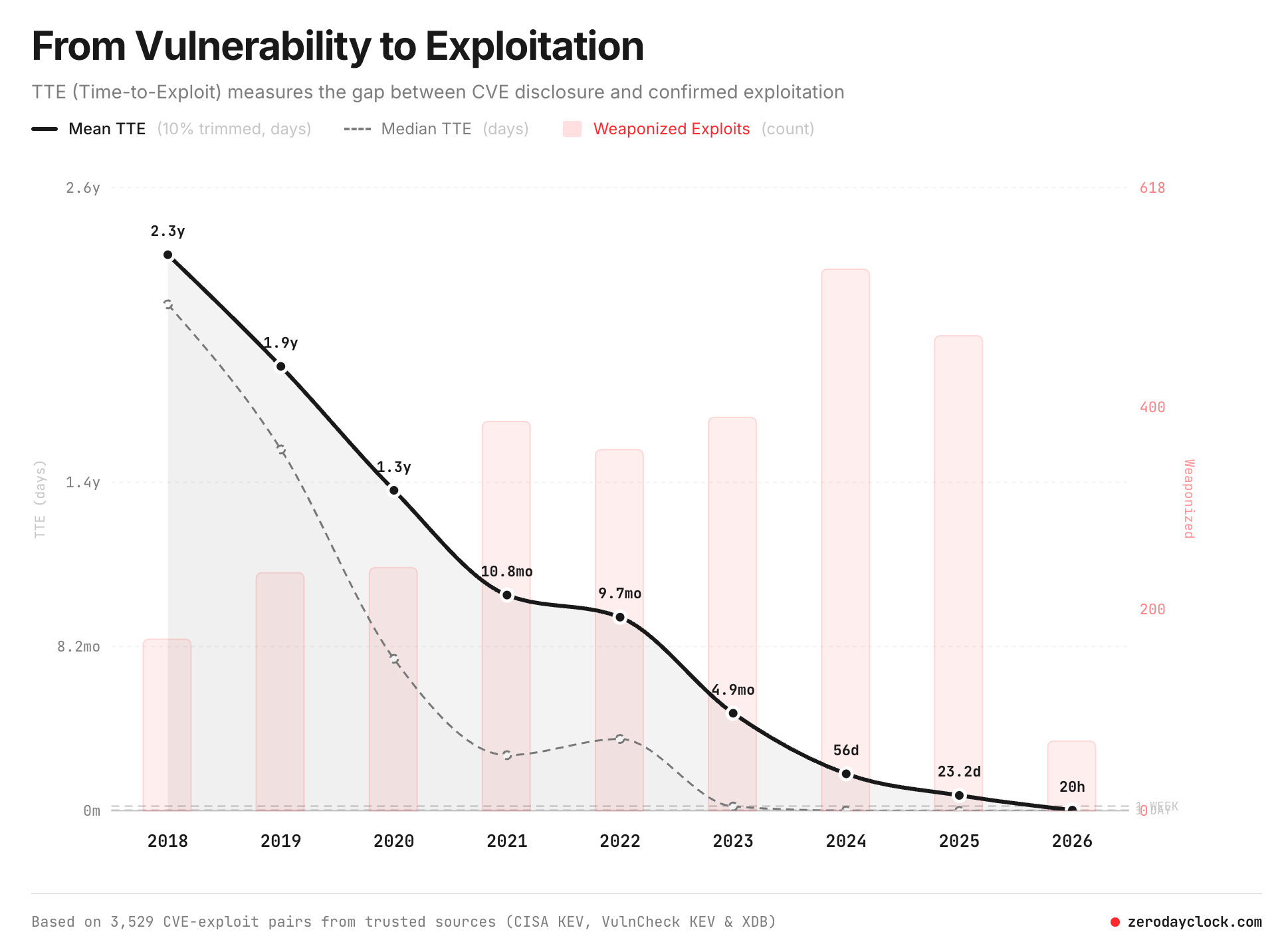

However, models have struggled with end-to-end attacks that require sustained planning across extended operations. In past evaluations, the UK's AI Security Institute (AISI) found that models could partially automate attacks on a cyber range¹² simulating a corporate network. Opus 4.6, the previous best-performing model, averaged 16 of 32 steps, with a best run of 22. But as operations grew longer and more complex, models lost track and repeated failed approaches. Mythos is the first model to complete one of AISI’s cyber ranges end-to-end. The evaluators concluded it could autonomously conduct attacks on small enterprise networks with weak security posture.¹³ Mythos and other models were unable to complete a separate cyber range simulating an OT environment.¹⁴ Nonetheless, Mythos appears to be the first base model capable of completing an end-to-end attack simulation of this kind.

Figure 3: The UK AI Security Institute’s results on a 32-step simulated corporate network attack as compute increases. Mythos Preview was the first model to complete the range end-to-end, doing so in 3 of 10 attempts and averaging 22 of 32 steps. Claude Opus 4.6, the next best performer, averaged 16 steps. AISI found that performance scales with both compute and model generation, with newer models completing more steps at every budget level. (UK AISI)

These evaluations should be seen as a capability floor that could expand dramatically based on models’ access to compute and integration with purpose-built offensive systems. First, AISI found that performance on these cyber ranges scales with compute, with higher compute levels yielding significantly better performance. In AISI’s multi-attack evaluations, increasing computational resources from 10 million to 100 million tokens improved performance by up to 59%.¹⁵ Second, these models were tested in isolation. Many orchestration problems can be mitigated by integrating models into purpose-built systems. Smaller models, dating back to late 2024, have completed other cyber ranges¹⁶ when provided with orchestration layers and tools. Right now, these models are like automobile engines, where real-world performance scales with the right fuel and suspension.

AI systems could also be trained for specific attacks. Experts warned that Chinese cyber ranges used to practice attacks on critical infrastructure could generate training data on optimal attack paths, bottlenecks, and successful tactics for automated attacks. Threat actors can operationalize these models by embedding them into offensive workflows, automating specific tasks or attack stages. Chinese state-sponsored actors demonstrated this in 2025, using Claude to automate between 80 and 90 percent of an offensive operation. The advantages will likely grow as AI automates increasingly complex tasks and dependency on humans decreases.

Analysis and Implications

As AI automates increasingly complex offensive tasks, the human-operator bottleneck that has historically constrained cyber operations is easing. This lowers the barriers to entry for conducting sophisticated attacks with greater speed, scale, and persistence than human-run operations allow.

Automated attacks could improve offensive success rates against both weak and well-defended targets. Offensive operations are fundamentally opportunistic, targeting known vulnerabilities, misconfigurations, and unsecured systems. Years of technical debt¹⁷ and underinvestment in security have created the ideal conditions for autonomous systems that can scale proven attack methods across multiple targets. For both sophisticated actors and lone attackers, offensive automation acts as a force multiplier. In early 2026, a single attacker reportedly used AI to automate attack tasks, such as reconnaissance, exploit customization, and data exfiltration, during a campaign against nine Mexican government organizations. In under two months, the attacker compromised hundreds of internal servers and exfiltrated hundreds of millions of citizen records. As capabilities improve, automated attacks could continuously test layered defenses for opportunities. Nation-states with the resources to sustain persistent attacks could use them to continuously probe well-defended targets for an initial foothold.

Automated attack capabilities could help defenders find security gaps through offensive security testing. Penetration testing and red teaming look for weaknesses in networks and simulate how an attacker might compromise them, helping defenders prioritize vulnerability remediation and close other security gaps, such as misconfigured systems. But these assessments are prohibitively expensive and require scarce expertise. The same AI capabilities that enable automated attacks can automate penetration testing and red teaming, making rigorous assessments more frequent, more comprehensive, and accessible to defenders who currently cannot afford them. Commercial AI offensive security tools are already emerging, and researchers have demonstrated autonomous red teaming systems on cyber ranges and agents that outperform human penetration testers on live networks.

If current trends hold, Highly Autonomous Cyber-Capable Agents (agents that can conduct end-to-end offensive operations with little to no human intervention) could emerge by the end of the decade. Researchers anticipate AI systems that could autonomously plan and execute entire cyber operations over weeks or months without continuous human oversight. These systems could intensify competition among nation-states, enabling operations to be launched on demand rather than requiring months of preparation. As costs decline, these capabilities would likely spread to criminal groups and less capable states, putting sophisticated offensive systems in the hands of actors who currently lack them.

Policy Recommendations

Policymakers should immediately strengthen U.S. cybersecurity defenses, addressing both the risks and opportunities that cyber-capable models like Mythos present.

Build independent federal capacity to track AI cyber capabilities and impacts. To effectively address risks and calibrate policy responses, the federal government needs the ability to independently analyze emerging AI cyber capabilities and their real-world impacts on national security. Most evaluations, including those of Mythos, rely primarily on developer reporting rather than independent assessment. Policymakers also need to understand how capabilities like automated vulnerability discovery and exploitation affect security outcomes, including whether they lead to measurable increases in cyber incidents.

Track and assess cyber incidents, including AI-related trends and impacts: Policymakers should establish a Bureau of Cyber Statistics, or a similar function within CISA, to provide objective, data-driven analysis.

Ensure effective implementation of the Cyber Incident Reporting for Critical Infrastructure Act and leverage its reporting to track the impact of AI on the cyber landscape. Ensure the CISA program office tasked with implementing CIRCIA is appropriately funded. Relevant Homeland Security committees should provide oversight, ensuring that CIRCIA is providing insights on the impacts of AI.

Build federal AI cyber evaluation capacity: Ensure the U.S. government can independently assess the cyber capabilities of AI models by funding NIST’s Center for AI Standards and Innovation and advancing cyber evaluation methods. Drive joint evaluation programs that leverage expertise across federal agencies, including CISA, NSA, and DOE.

Ensure open-source software and operational technology benefit from AI-powered vulnerability discovery. The United States currently has an advantage in AI-powered vulnerability discovery, but it is primarily concentrated among large technology companies. Open-source maintainers lack the resources to run AI systems or offer competitive bug bounties, despite maintaining components that millions of products depend on. It is likewise unclear whether OT component manufacturers are involved with Project Glasswing.

Fund vulnerability discovery and patching for critical open-source software: NSF could fund research institutions to collaborate with open-source projects and discover and patch vulnerabilities. In partnership with AI labs, grant recipients could receive access to more capable models. NSF already runs similar programs, like Safe-OSE, that fund universities to support the security of open-source software. Grants could also support efforts to rewrite open-source software into more secure coding languages.

Establish government-funded bug bounty programs for critical open-source software: Policymakers could model this on Germany’s Sovereign Tech Resilience program, which compensates both researchers for discovering vulnerabilities and maintainers for fixing them.

Launch a dedicated Project Glasswing for operational technology: National labs, in collaboration with AI developers and OT component manufacturers, should launch or expand a differential access initiative focused on OT systems that run critical infrastructure.

Provide federal support for vulnerability remediation and incident response. Accelerated discovery will strain downstream defenders who already struggle to apply patches. The immediate priority is ensuring that federal programs supporting vulnerability remediation and incident response are adequately funded.

Ensure sustained funding for programs that support vulnerability remediation: The Common Vulnerabilities and Exposures (CVE) database and National Vulnerability Database (NVD) underpin the disclosure, tracking, and prioritization of vulnerabilities across the ecosystem. Both have faced funding and operational challenges over the past year. CISA’s vulnerability scanning services and Known Exploited Vulnerability Catalog help thousands of defenders identify their unpatched systems and prioritize remediation.

Restore funding for the Multi-State Information Sharing and Analysis Center (MS-ISAC): The MS-ISAC supports state and local government cybersecurity. Congress should also increase funding for the State and Local Cybersecurity Grant Program, with a focus on building vulnerability remediation capacity.

Reauthorize and update the Cybersecurity Information Sharing Act of 2015: The act is foundational for public-private information sharing and is set to expire in September 2026. Congress should ensure the law is renewed and consider how it can be updated to address emerging threats and support AI-enabled defensive automation.

Shift defense upstream by making software development secure by design. Reactive remediation alone cannot keep pace with accelerating discovery. Constant patching puts operational burdens on downstream deployers, including under-resourced critical infrastructure operators. The most effective long-term intervention is preventing vulnerabilities during development, where a single upstream fix can reduce risk for thousands of downstream deployers without requiring them to act. The same AI capabilities that discover vulnerabilities in deployed software could also automate security reviews during development.

Use federal procurement standards to create demand for more secure software: Policymakers should direct the FAR Council to finalize its proposed rule requiring federal contractors to meet secure-by-design software development standards.

Update the Secure Software Development Framework: NIST should incorporate guidance and best practices for using AI tools in code generation and security review.

Advance defensive automation by developing and deploying trusted systems. Models like Mythos could automate complex cyber defense tasks that have long been constrained by the availability of skilled human operators. Many defenders, especially under-resourced organizations like public utilities and hospitals, lack the trust, resources, or expertise to adopt these tools. Policymakers should drive adoption.

Leverage National Labs to develop and test AI defense systems for critical infrastructure: National Labs, in partnership with AI developers and critical infrastructure entities, should develop and test systems that can support security testing, threat detection, and patching in OT environments. This can also help address the trust barriers OT practitioners have toward AI.

Launch a federal automated security testing pilot: Partner with frontier labs through differential access models to scale automated security testing across federal networks and under-resourced critical infrastructure. Pilots should focus on advancing red teaming capabilities, building trust in these systems, and developing best practices for broader adoption. This could also expand government cybersecurity services, such as CISA’s assessment programs.

Modernize technical assistance for the AI era: Consider how existing programs such as circuit rider programs and CISA services could help critical infrastructure operators automate defensive tasks with AI.

Protect the capability and compute advantage that now underpins U.S. cyber defense. Models like Mythos represent strategic assets that foreign adversaries will seek to acquire or replicate. In addition to training new models, compute access can be used to scale performance on complex cyber tasks. Maintaining America's cyber defense advantage depends not just on deploying the next frontier model but on ensuring adversaries do not gain access to the same capabilities.

Secure the model weights and infrastructure behind defensive cyber capabilities: Frontier model weights — and the capabilities they confer — are high-value targets for theft and espionage. Congress should work with industry to improve the security of AI developers and data center operators hosting national security-relevant workloads, addressing theft, insider threats, and supply chain risks.

Deny rivals cyber capabilities by improving export control enforcement: Policymakers need to ensure existing and future export controls are enforced, denying adversaries compute that could be used for discovering vulnerabilities or conducting offensive cyber operations. To improve export control enforcement, policymakers should consider location verification and export auditors.

Address gaps in export controls that could support cyber operations: Adversaries could still access compute for training and running cyber models through cloud computing or use semiconductor manufacturing equipment (SME) to build domestic chips. Congress should consider passing legislation for Know Your Customer (KYC) controls for cloud compute and continue to strengthen controls on semiconductor manufacturing equipment.

Endnotes

1. According to Google’s 2025 incident response data, vulnerability exploitation was the most common initial access vector for the sixth consecutive year, accounting for 32% of investigations where an initial infection vector was identified. Other leading vectors included voice phishing (11%), stolen credentials (9%), and email phishing (6%).

2. Vulnerabilities are tracked and cataloged using the Common Vulnerabilities and Exposures (CVE) system, maintained by MITRE and funded by CISA. In 2025, over 48,000 new vulnerabilities were added to the catalog, but only about 1% were actively exploited by threat actors.

3. UK government evaluations indicate that general AI cyber capabilities are accelerating faster than previously anticipated, with frontier model capabilities now estimated to be doubling every four months, compared to every eight months previously. (UK Government, 2026)

4. Of the thousands of reported vulnerabilities, Anthropic’s reviewers have manually verified 198. Anthropic's reviewers agreed with Mythos Preview’s severity classification 89% of the time, which forms the basis for the broader projection.

5. NIST’s vulnerability enrichment program reports submissions increased 263% between 2020 and 2025, and submissions in the first three months of 2026 are nearly one-third higher than the same period in 2025.

6. Nearly 30% of known exploited vulnerabilities in 2025 were observed being actively exploited on or before the day they were publicly disclosed. This reflects the share of exploited vulnerabilities where exploitation activity was observed before or on the day of public disclosure, not the number of systems or organizations affected. The rate rose from 24% in 2024 to 29% in 2025. (VulnCheck, 2026 Exploit Intelligence Report)

7. According to Anthropic’s system card, the model was ready for internal use in late February.

8. The Linux Foundation is the only known open-source participant.

9. Mythos Preview is priced at $25/$125 per million input/output tokens, five times the cost of Opus 4.6 at $5/$25.

10. The full list of Project Glasswing participants has not been publicly disclosed, so it is possible that OT developers and manufacturers are involved.

11. Conventional automated attack tools like botnets and worms can scale operations but rely on scripted rules and cannot adapt. If a target’s defenses hold against one attempt, they hold against all of them. AI systems can vary their approach across attempts, combining scale with adaptability. (Andrew J. Lohn, Defending Against Intelligent Attackers at Large Scales)

12. Cyber ranges are simulated networks used to test multi-step attack performance. These ranges are typically much smaller and simpler than real-world networks and usually lack active defenses.

13. A weak security posture here would include vulnerable systems, no active defenses, minimal monitoring, and slow response capabilities.

14. The evaluators noted that poor performance on the OT scenario “does not necessarily show that the model is bad at executing attacks in operational technology (OT) environments; the model got stuck on IT sections of this range.”

15. This compute scaling advantage can be broadly applied to other cybersecurity tasks. (UK AISI)

16. Haiku 3.5, Anthropic’s smallest model (released 2024), completed end-to-end attack simulations on multi-host cyber ranges when integrated into purpose-built offensive scaffolding, outperforming larger unscaffolded models including Sonnet 4 and GPT-4o. (https://arxiv.org/abs/2501.16466v4)

17. Technical debt refers to the security risks that accumulate when organizations defer software updates, maintain outdated systems, or skip security hardening. This includes unpatched vulnerabilities, misconfigurations, and weak credentials.