Risk Reporting for Developers’ Internal AI Model Use

This report was co-authored by Sambhav Maheshwari, Joe O’Brien, Theo Bearman, and Oliver Guest.

Frontier AI companies first deploy their most advanced models internally for weeks or months of safety testing, evaluation, and iteration before a possible public release. For example, Anthropic recently developed Mythos Preview, a new class of model with advanced capabilities relevant to cyber offense, which was available internally for at least six weeks before it was publicly announced. This internal use creates risks that frameworks designed for external deployment may fail to address.

Legal frameworks, notably California's Transparency in Frontier Artificial Intelligence Act (SB 53), New York's Responsible AI Safety and Education (RAISE) Act, and the EU's General-Purpose AI Code of Practice, all discuss risks from internal AI use. They require frontier developers to make and implement plans for how to manage risks from internal use, and to produce internal use risk reports describing their safeguards and any residual risks. This guide provides a harmonized standard for companies to produce internal use risk reports suitable for all three regulatory frameworks. It is addressed primarily to evaluation and safety teams at frontier AI developers, and secondarily to regulators and auditors seeking to understand what good reporting looks like.

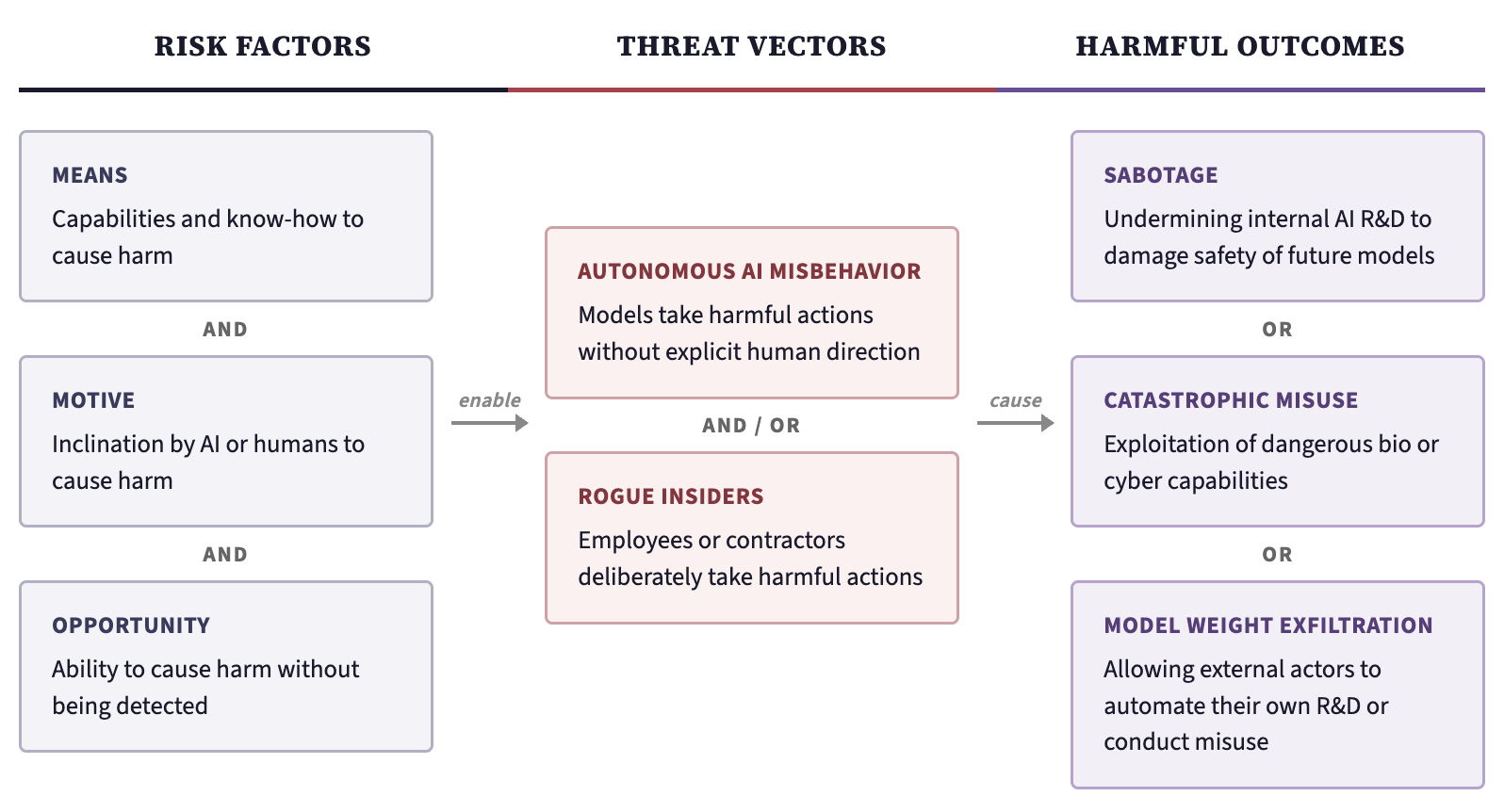

Figure 1: Risk taxonomy for internal AI model use. The conjunction of all three risk factors (left) and one or both threat vectors (center) can lead to any of the harmful outcomes (right).

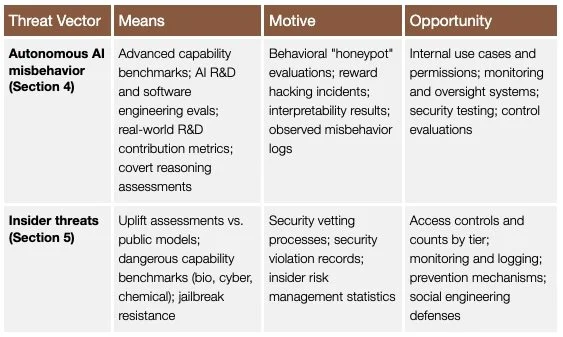

Figure 1 summarizes the risk landscape for internal AI models. Given the pace of AI R&D automation and the limited external visibility into how companies use their most capable models internally, regular and detailed risk reporting may be one of the few mechanisms available to ensure that the risks from internal AI use are identified and managed before they materialize. Whenever a substantially more capable or riskier model is deployed internally, the developer should create a risk report and argue why the model is safe to deploy. Table 1 summarizes some key indicators the report should include for each combination of threat vector and risk factor.

Table 1: Key internal use risk indicators to report. The reporting focus can generally be limited to the most capable or highest risk internal model. Insider threat indicators (bottom row) are primarily intended for confidential submission to regulators rather than public summary.