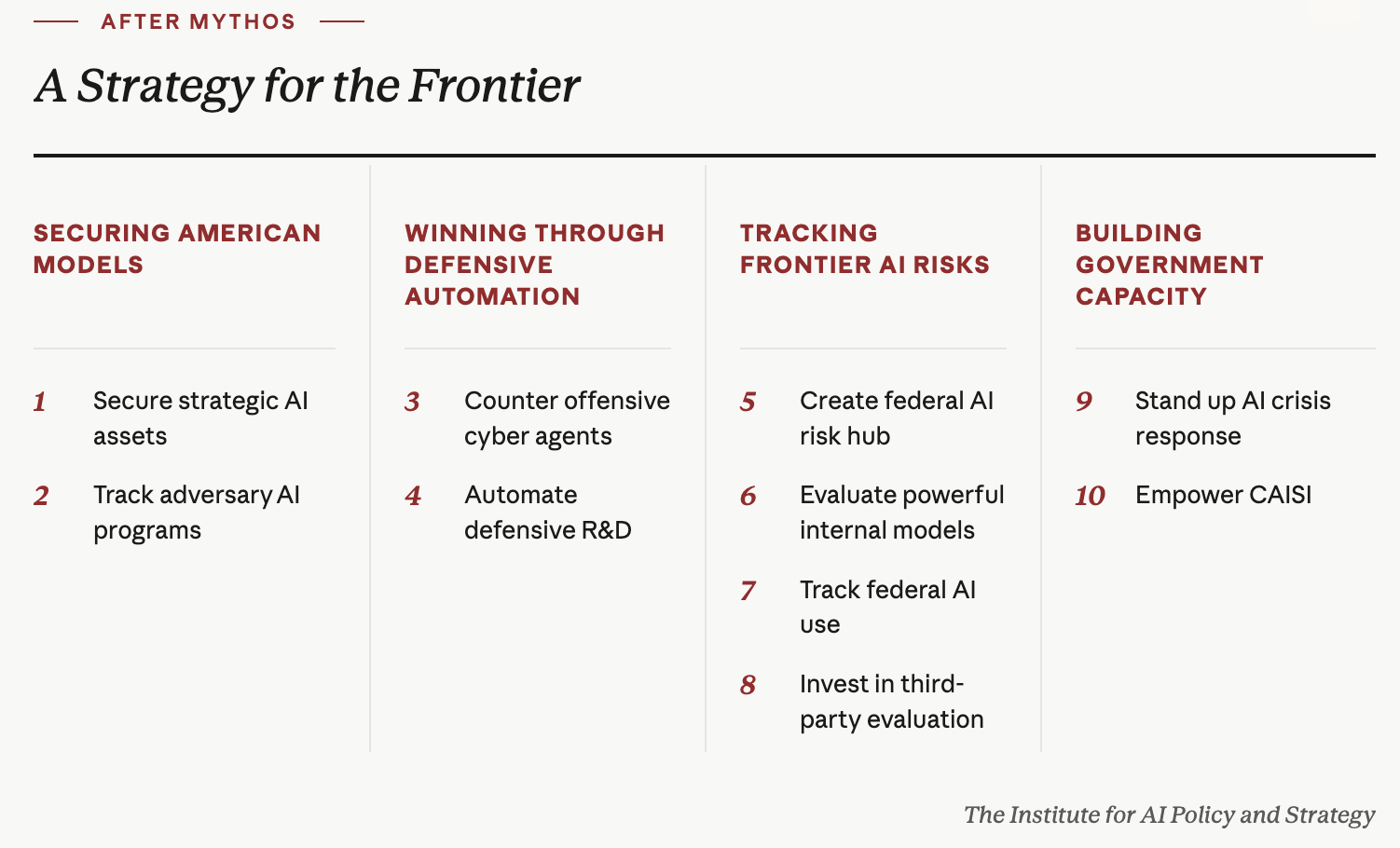

After Mythos: A National Security Playbook for Frontier AI

This memo was co-authored by Brianna Rosen & Christopher Covino.

The White House is weighing a range of executive actions to address the national security risks posed by rapid advances in frontier AI. Anthropic’s recent disclosure that its Mythos Preview model demonstrated cyber capabilities it judged too dangerous to release publicly has sharpened the case for government action, but Mythos is only one signal in a broader trajectory. By current estimates, model performance is doubling every four months, and OpenAI’s GPT-5.5 has reportedly already demonstrated similar cyber capabilities. Risks beyond cyber, including biosecurity threats, are likely to emerge absent additional safeguards. A strategic policy response must look beyond the Mythos moment to secure models against adversaries, drive defense through automation, expand public-private information sharing, and build government capacity. Together, these interventions will help create an environment in which policy responses can match the scale of frontier risks while promoting secure innovation.
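To illustrate what the cited doubling rate implies, assume (as a simplification) that performance grows exponentially with a constant four-month doubling time. With baseline performance P_0 and t measured in months, that would amount to roughly an eightfold increase per year:

```latex
\begin{aligned}
P(t)  &= P_0 \cdot 2^{t/4}, \qquad t \text{ in months} \\
P(12) &= P_0 \cdot 2^{12/4} = P_0 \cdot 2^{3} = 8\,P_0
\end{aligned}
```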

Securing American Models

1) Secure strategic AI assets such as model weights against adversarial theft and sabotage. The most advanced frontier AI systems require protection commensurate with national security risks, yet the current security posture of most frontier companies leaves them vulnerable to exploitation by highly capable state and criminal actors. Unauthorized access to model weights, algorithmic insights, training data, and physical infrastructure could allow adversaries to replicate U.S. capabilities, compress R&D timelines, or exploit vulnerabilities in deployed systems.

Recommended Policy Actions

The White House should coordinate with the Pentagon, Intelligence Community (IC), and the National Institute of Standards and Technology’s Center for AI Standards and Innovation (CAISI) to accelerate the development of technical standards for high-security AI datacenters, including by prioritizing R&D on confidential computing and tamper-resistant hardware.

The above agencies should coordinate with industry to launch a small-scale secure inference pilot to test the feasibility and cost of implementing advanced security measures.

The National Security Agency (NSA), through its AI Security Center, should extend its existing industry partnership model to frontier AI developers, data centers, and other infrastructure housing frontier model weights, supporting voluntary threat detection and analysis.

2) Expand intelligence insights into adversarial development and use of frontier AI. It is only a matter of time before U.S. adversaries acquire Mythos-level capabilities; China’s AI ecosystem is roughly seven to eight months behind the U.S. frontier. Monitoring this gap will require sustained intelligence collection and an analytic framework that translates insights into early warning systems.

Recommended Policy Actions

The Office of the Director of National Intelligence (ODNI) should make adversary frontier AI development a top-tier priority in the National Intelligence Priorities Framework. Its National Intelligence Manager for Emerging and Disruptive Technologies should stand up a dedicated capability to track adversary compute infrastructure, model capabilities and R&D pipelines, technical talent flows, and strategic AI doctrine.

The ODNI, in coordination with CAISI and the National Cyber Director, should develop an AI-specific indicators and warning framework built on observable metrics that signal when frontier capabilities are approaching thresholds of national security concern. In coordination with the National Security Council (NSC), each indicator should be tied to a defined response trigger, such as stronger export controls or increased investment in defensive automation.
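A minimal sketch of how observable indicators could be tied to defined response triggers. The indicator names, thresholds, and observed values below are hypothetical placeholders, not metrics proposed by ODNI, CAISI, or the NSC; only the two responses (stronger export controls, increased investment in defensive automation) come from the recommendation itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    """An observable metric paired with a threshold and a pre-agreed response (all hypothetical)."""
    name: str
    threshold: float
    response_trigger: str


def triggered_responses(indicators: list[Indicator], observations: dict[str, float]) -> list[str]:
    """Return the response trigger of every indicator whose observed value meets or exceeds its threshold."""
    responses = []
    for indicator in indicators:
        value = observations.get(indicator.name)
        # Metrics with no current observation are skipped rather than treated as a breach.
        if value is not None and value >= indicator.threshold:
            responses.append(indicator.response_trigger)
    return responses


# Hypothetical indicators; real metrics and thresholds would be set by the agencies involved.
INDICATORS = [
    Indicator("adversary_autonomous_exploit_success_rate", 0.5, "consider stronger export controls"),
    Indicator("adversary_cyber_benchmark_score", 0.8, "increase investment in defensive automation"),
]

# Illustrative observations, not real data.
observations = {
    "adversary_autonomous_exploit_success_rate": 0.62,
    "adversary_cyber_benchmark_score": 0.55,
}
print(triggered_responses(INDICATORS, observations))  # ['consider stronger export controls']
```

Keeping thresholds and triggers as data rather than logic is one possible design choice: analysts could revise them as capabilities shift without changing how indicators are evaluated.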

Winning Through Defensive Automation

3) Accelerate defense against AI-enabled cyber operations by scaling defensive automation and building infrastructure to detect and disrupt offensive cyber agents. As AI capabilities advance and attackers gain an asymmetric advantage from accelerated vulnerability discovery, defenders will likely be slower to adapt absent sustained government support.

Recommended Policy Actions

The Cybersecurity and Infrastructure Security Agency (CISA) and NSA should lead a joint, iterative operational pilot that uses AI systems to scale penetration testing and red teaming on federal networks.

The Department of Energy (DOE) national labs should partner with AI developers and critical infrastructure operators to develop, test, and deploy AI systems for risk-based vulnerability management, remediation, and threat detection in operational technology environments for critical infrastructure.

ODNI should work with industry to establish an Agentic Cybersecurity Exchange, modeled on the Global Signal Exchange and composed of major model and cloud providers, to detect and disrupt offensive cyber agents.

4) Automate defensive R&D across high-priority safety and security domains. Fully automated AI researchers may emerge within several years, which could significantly accelerate scientific discovery, including the development of more capable AI models. Without a deliberate effort to advance safety-enabling technologies in parallel, dangerous AI capabilities could outpace the defenses needed to manage them.

Recommended Policy Actions

The White House should secure voluntary commitments from AI companies to dedicate a portion of their compute to automating safety and security R&D that addresses the externalities of their own systems. This R&D should include both traditional AI safety work (robustness, control, and interpretability) and a flexible portfolio focused on societal resilience in cybersecurity, biosecurity, and other priority domains.

The DOE should procure dedicated inference compute and negotiate frontier model access agreements with AI companies for government-run safety and security research in secure environments.

Tracking Frontier AI Risks

5) Create a central federal hub for AI risk information sharing across the IC, CAISI, and defender agencies. Defenders need fast, secure threat information to patch vulnerabilities before attackers exploit them. Differential access approaches like Anthropic’s Project Glasswing for Mythos give defenders an advantage by limiting model access, but only when paired with robust interagency information-sharing mechanisms that allow for timely vulnerability patching.

Recommended Policy Actions

The White House should designate the ODNI as a federal fusion point for AI risk information, working in partnership with CAISI. The ODNI is the natural hub for collecting classified threat intelligence, while CAISI is better positioned to draw insights from industry risk reporting and third-party evaluations. Integrating these data streams would allow the ODNI to share more actionable threat intelligence with CISA and other defender agencies.

The ODNI should build dedicated dissemination channels at appropriate classification levels, modeled on the Critical Infrastructure Intelligence Initiative, to deliver timely threat information to Chief AI Officers across federal agencies and to industry.

6) Expand secure public-private information-sharing mechanisms to increase government insight into commercial security protocols around advanced internal models. Frontier AI companies typically deploy their most advanced models internally before (or instead of) external release. Because internal models often exceed publicly released models in capability, they may present outsized risks of loss of control, sabotage, or misuse. Yet testing of these internal models is voluntary and uneven, presenting clear national security risks.

Recommended Policy Actions

CAISI should work with frontier developers to negotiate agreements to evaluate internal models. Existing agreements are framed around “pre-deployment” evaluation and appear to cover only models that companies intend to release publicly, so they may miss the most powerful models within companies.

7) Provide agency-specific guidance on agent identifiers to improve tracking and monitoring of federal AI use. Agent identifiers would enable federal agencies to assign agent-specific permissions and access controls, monitor agent activity and usage once deployed, better detect malicious use and offensive cyber agents, and remediate when agents fail or are compromised.

Recommended Policy Actions

The White House Office of Management and Budget, in coordination with NIST and CISA, should prepare agent identifier use and implementation guidelines for federal agencies. The ODNI and the Pentagon should prepare equivalent guidelines for their own networks and implement agent identifiers within them.

CISA, in coordination with NIST, should develop federal-specific technical standards for agent identifier interoperability, logging, and revocation.
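To make the functions described above concrete, the following Python sketch shows a hypothetical agent identifier registry covering agent-specific permissions, activity logging, and revocation. The class names, permission strings, and log format are illustrative assumptions and do not reflect any existing NIST or CISA specification.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class AgentRecord:
    """Hypothetical record tying a unique agent identifier to its owner and permissions."""
    agent_id: str
    owner: str
    permissions: frozenset
    revoked: bool = False


class AgentRegistry:
    """Illustrative registry: issue identifiers, check permissions, log activity, revoke agents."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}
        # Each entry: (UTC timestamp, agent_id, requested action, allowed?)
        self.audit_log: list[tuple[str, str, str, bool]] = []

    def register(self, owner: str, permissions: set[str]) -> str:
        """Issue a new random identifier with a fixed set of agent-specific permissions."""
        agent_id = str(uuid.uuid4())
        self._agents[agent_id] = AgentRecord(agent_id, owner, frozenset(permissions))
        return agent_id

    def authorize(self, agent_id: str, action: str) -> bool:
        """Allow the action only for a known, unrevoked agent holding that permission; log every check."""
        record = self._agents.get(agent_id)
        allowed = record is not None and not record.revoked and action in record.permissions
        timestamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append((timestamp, agent_id, action, allowed))
        return allowed

    def revoke(self, agent_id: str) -> None:
        """Revoke a failed or compromised agent; later checks are denied but still logged."""
        record = self._agents.get(agent_id)
        if record is not None:
            record.revoked = True


registry = AgentRegistry()
agent = registry.register("example-agency", {"read:tickets"})
print(registry.authorize(agent, "read:tickets"))    # True
print(registry.authorize(agent, "write:firewall"))  # False: permission not granted
registry.revoke(agent)
print(registry.authorize(agent, "read:tickets"))    # False: agent revoked
```

Because every authorization check is logged, including denials after revocation, the same record could support both ongoing monitoring and post-incident remediation.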

8) Invest in evaluation science and a robust third-party evaluation ecosystem. Evaluations are a core tool for measuring model risks and the foundation of any pre-deployment governance scheme. But the field faces significant challenges: models increasingly recognize when they are being evaluated, benchmarks saturate as fast as capabilities advance, the effect of scaffolding on real-world impact is routinely underweighted, and existing methods cannot adequately measure model propensity or safeguard efficacy.

Recommended Policy Actions

Invest in evaluation R&D through CAISI, DARPA (including expansion of the AI FORGE program and new initiatives addressing evaluation awareness, propensity evaluations, and safeguard testing), and DOE national labs.

Support an ecosystem of Independent Verification Organizations (IVOs), including a federal accreditation framework for third-party auditors of frontier model developers, and establish model access standards for IVOs as part of a larger effort to institutionalize pre-deployment testing. Accreditation could be required for regulatory or procurement purposes, or serve as a quality signal in an open market for third-party evaluations.

Building Government Capacity

9) Strengthen the federal government’s ability to prevent and respond to AI-enabled crises. Effectively mitigating risks from frontier AI will require robust incident response planning, coordination across federal, state, and private-sector actors, and an expanded in-house federal talent pipeline.

Recommended Policy Actions

Establish a National AI Reserve Corps of pre-vetted, cleared experts who can be activated as Special Government Employees, supported by expedited clearance pathways, dedicated hiring authorities, and contract-based surge mechanisms.

Establish a Rapid Emergency Assessment Council for Threats (REACT) to be activated when defined AI incident severity thresholds are crossed, with authority to rapidly convene pre-vetted external experts from the National AI Reserve Corps to assess threats and response plans.

10) Empower CAISI to meet the national security demands of frontier AI. CAISI concentrates the federal government’s frontier AI technical expertise but lacks the statutory authority and resources it needs to fulfill its mission and complement the role of the IC.

Recommended Policy Actions

The White House should take the following steps:

Work with Congress to authorize CAISI under statute and appropriate at least $84 million annually to fulfill the purposes laid out in the AI Action Plan and other administration directives.

Direct CAISI to expand its evaluation and testing remit to include loss-of-control risks, AI R&D automation, and safeguard efficacy, with resources to negotiate voluntary agreements with developers in these domains.

Task CAISI with developing post-deployment monitoring standards for high-stakes deployments, such as logging requirements for agentic systems, misuse detection in cybersecurity and biosecurity contexts, and control measures for autonomous agents operating in federal systems and critical infrastructure.

Leverage the United Kingdom’s AI Security Institute (AISI) as a resource multiplier through enhanced CAISI-AISI information sharing, joint evaluations of frontier models, and talent exchanges.